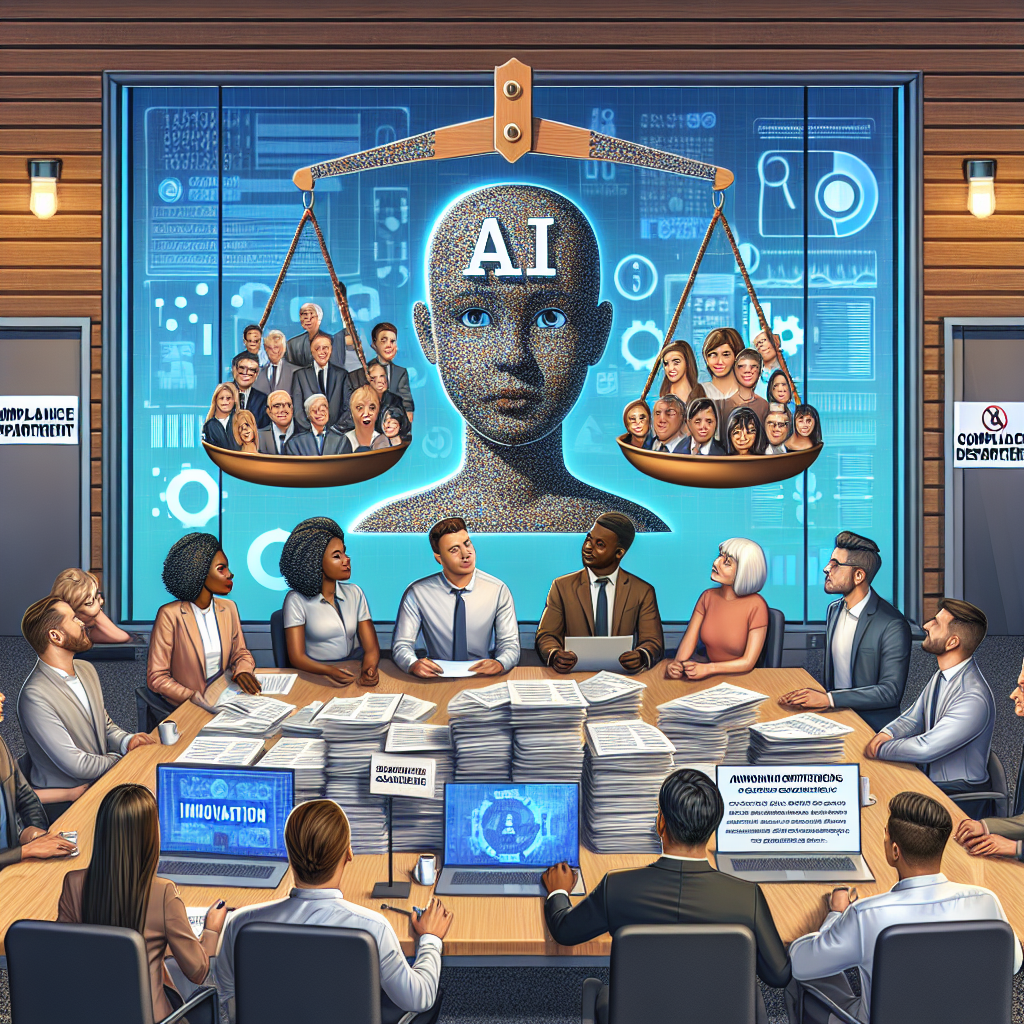

The rapid advancement of artificial intelligence has prompted regulatory bodies worldwide to reevaluate existing frameworks and draft new rules. Companies at the frontier of AI are grappling with a regulatory landscape that is evolving almost as quickly as the technology itself. The conversation about AI governance is not limited to how AI can be controlled; it also covers ethical considerations, transparency, and accountability.

In the United States, initiatives such as the establishment of the National AI Initiative Office mark significant steps toward a cohesive governance framework for AI technologies. The initiative aims to open channels of collaboration among federal agencies, industry, and academia to accelerate responsible AI use. The challenge is to cultivate an ecosystem that balances innovation with safeguards that protect consumer interests.

Real-world cases, such as the scrutiny of Facebook's AI systems, underscore the importance of a comprehensive regulatory approach. Facebook's algorithms have been criticized for issues such as discrimination and the spread of misinformation, which shows how much influence such technologies can have. To mitigate these risks, regulatory bodies and AI developers are focusing on frameworks that ensure ethical use.

AI companies are also prioritizing compliance with global data protection regulations. The European Union's General Data Protection Regulation (GDPR) is a benchmark in this area. It emphasizes users’ control over their data, demanding transparency in AI systems’ decision-making processes. US-based companies aiming for international markets must align with these standards, which often involves overhauling data handling practices.

The AI sector is likely to see increased demand for specialized compliance roles. Compliance teams will need to address core areas such as data privacy, algorithmic fairness, and the explainability of AI systems. As the federal government and international bodies continue to roll out guidelines, AI companies that establish compliance protocols early will be better positioned for long-term success.

Navigating Regulatory Challenges in AI Development

This article explores the current landscape of AI regulation in the US, highlighting initiatives and challenges in creating ethical and transparent AI frameworks.
