The DBDN project began as a study of real-time pattern recognition in a video stream, but it grew into a new concept whose potential goes far beyond simple pattern recognition.

"We were not even sure whether this idea was worth anything," says Volodymyr Bykov, CEO of Ozolio Inc. "We just hypothesized that the complexity of neural pathways is as important for the learning process as the number of neural connections. If this is true, then back-propagation is just a redundant workaround that solves the problems of an incorrect neural model. Of course, this hypothesis created many challenges, but we were able to build and successfully test the first prototype."

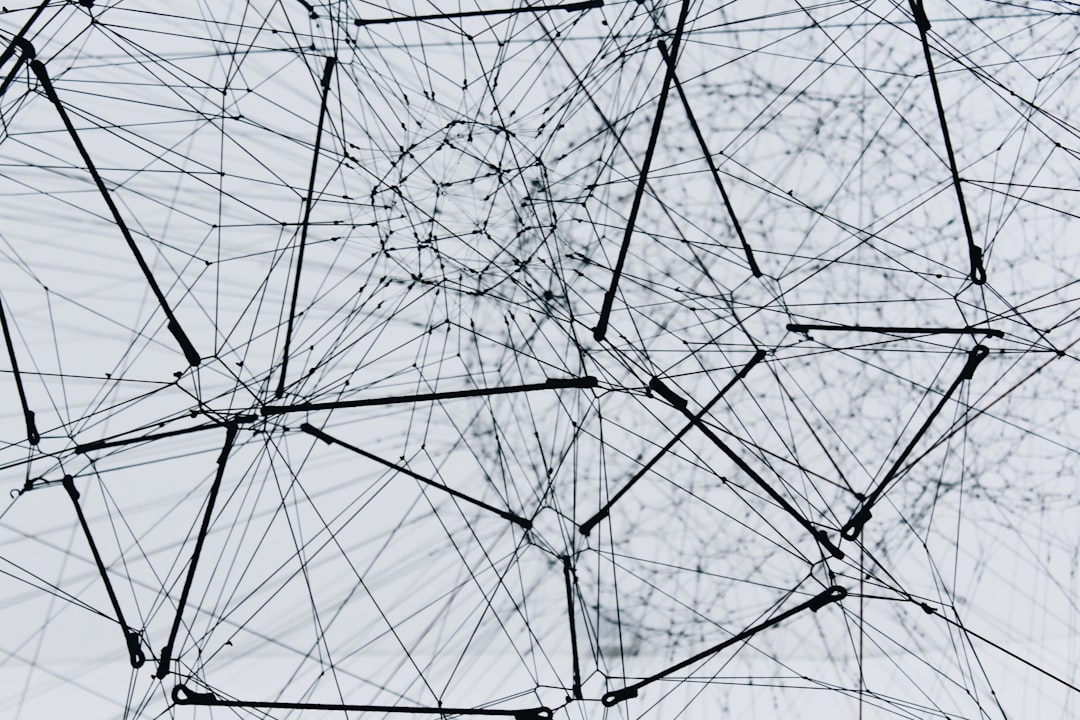

Although DBDN can be considered an improved version of a conventional multilayer neural network, several structural differences distinguish it from other AI models:

- DBDN treats every artificial neuron as an independent unit capable of adjusting its properties without considering the status of surrounding neurons or an entire neural network.

- The neural network forms several intersecting pathways through the same area. These pathways play a key role in the cross-referencing mechanism that essentially allows DBDN to learn, recognize, and classify patterns.

- DBDN does not use back-propagation or any other free-roaming algorithm to train and normalize the artificial neural network.

- The neural network can learn continuously, unless its current level of knowledge needs to be preserved.

- The training process does not require a significant increase in resource usage or a special environment.
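
The idea of a neuron that adjusts itself without a global training pass can be illustrated with a generic local learning rule. The sketch below is not DBDN's algorithm, which has not been published in this article; it uses Oja's rule, a well-known textbook rule, purely as a stand-in. The class `LocalNeuron`, the `frozen` flag, and the helpers `demo` and `weight_norm` are hypothetical names introduced for illustration only.

```python
import math
import random


class LocalNeuron:
    """A unit that updates its weights from its own input and output only.

    Illustrative stand-in using Oja's rule; NOT the DBDN update rule.
    """

    def __init__(self, n_inputs, rate=0.01, seed=0):
        rng = random.Random(seed)
        self.weights = [rng.uniform(-0.1, 0.1) for _ in range(n_inputs)]
        self.rate = rate
        # Hypothetical switch: stop learning to preserve current knowledge.
        self.frozen = False

    def activate(self, inputs):
        # Plain weighted sum; no information from other neurons is used.
        return sum(w * x for w, x in zip(self.weights, inputs))

    def learn(self, inputs):
        y = self.activate(inputs)
        if not self.frozen:
            # Oja's rule: dw = rate * y * (x - y * w).
            # Only local quantities (x, y, w) appear; no error signal is
            # propagated back from later layers.
            self.weights = [
                w + self.rate * y * (x - y * w)
                for w, x in zip(self.weights, inputs)
            ]
        return y


def weight_norm(neuron):
    """Euclidean length of the neuron's weight vector."""
    return math.sqrt(sum(w * w for w in neuron.weights))


def demo(steps=5000, seed=1):
    """Feed the neuron noisy samples that vary mostly along the (1, 1) direction."""
    rng = random.Random(seed)
    neuron = LocalNeuron(n_inputs=2)
    for _ in range(steps):
        s = rng.gauss(0.0, 1.0)
        sample = [s + rng.gauss(0.0, 0.1), s + rng.gauss(0.0, 0.1)]
        neuron.learn(sample)
    return neuron


if __name__ == "__main__":
    trained = demo()
    print("weights:", trained.weights)
    print("norm:", weight_norm(trained))
```

In this sketch the neuron learns on every sample it sees, with no separate training phase, and setting `frozen = True` stops further changes. How DBDN itself achieves these properties, and how its intersecting pathways cross-reference one another, is not covered by this example.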

These and other features of DBDN could help in designing autonomous hardware solutions and integrated neural circuits that can train the neural network without excessive power consumption, complex algorithms, or acceleration of any kind.

"We are still in the early stages, and the full potential of this idea is yet to be discovered," continues Volodymyr Bykov. "The first prototype is just a toy in comparison to the engine that we plan to design in the second stage. Of course, this will require more effort, investment, and development resources, but I'm sure we will make it."